Learning to Work With AI Video Tools: A Beginner’s Honest Guide to Getting Started

When I first opened an AI video generator, I expected magic. Type a prompt, get a polished video. That’s not quite how it went.

What actually happened was a series of small surprises — some outputs looked impressive, others missed the mark entirely. Over several weeks of experimentation, I started understanding what these tools can realistically do and, more importantly, how to work with them rather than expecting them to work for me automatically.

This guide is for anyone standing at that same starting point. If you’re curious about AI-powered video and image creation but unsure what the experience actually looks like, here’s what I’ve learned through hands-on use.

What Newcomers Usually Expect (And What Actually Happens)

Most beginners approach an AI video generator with one of two assumptions: either it will instantly replace their entire production workflow, or it’s just a gimmick that produces unusable content.

Neither is accurate.

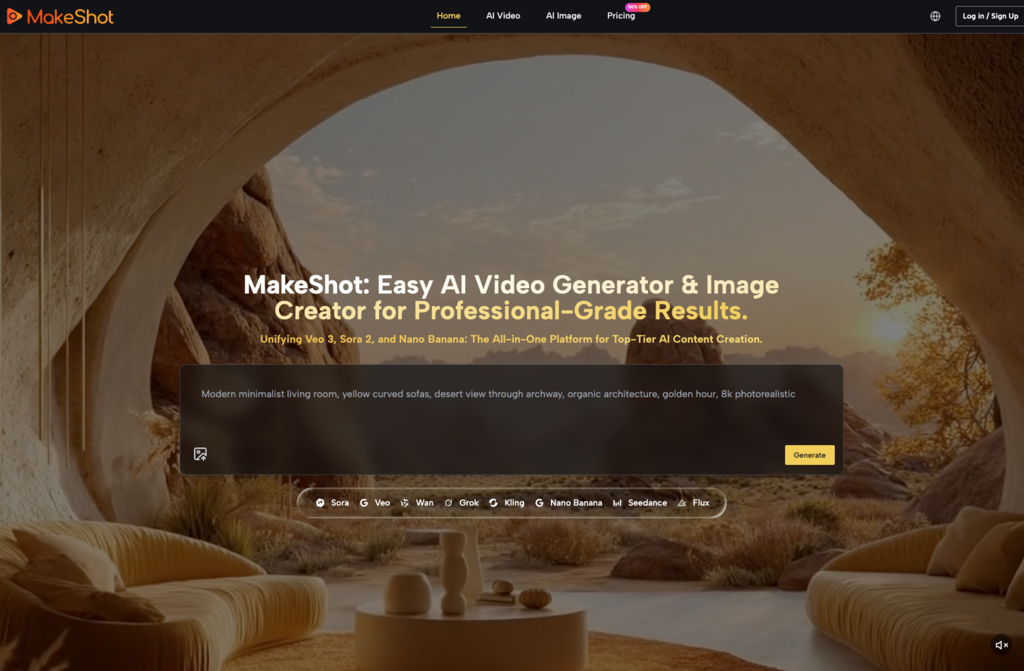

The reality sits somewhere in between. Modern platforms like MakeShot, which brings together models such as Veo 3, Sora 2, and Nano Banana Pro under one interface, can produce genuinely useful output. But “useful” depends heavily on how you frame your requests and what you’re trying to accomplish.

Here’s what early experimentation typically looks like:

- Your first few prompts produce results that feel random or off-target

- You start noticing which word choices influence output quality

- Certain types of content (product shots, simple animations) work better than others from the start

- You gradually develop a mental model of what each tool handles well

This learning curve isn’t a flaw — it’s the nature of working with generative systems. The sooner you accept that iteration is part of the process, the faster you’ll start getting results you can actually use.

Common Misunderstandings That Slow People Down

“The AI Should Understand What I Mean”

This is the biggest adjustment for most beginners. An AI video generator or image creator can’t read your mind. It works only from the words you give it, and it sometimes interprets them in unexpected ways.

Writing “a professional business video” gives the system almost nothing to work with. Writing “a short product demonstration clip showing a smartphone rotating against a white background with soft lighting” gives it something concrete.

Specificity isn’t optional — it’s the primary skill you’re developing.

“One Generation Should Be Enough”

Professional creators using these tools rarely accept the first output. They generate multiple variations, compare results, and refine their prompts based on what works.

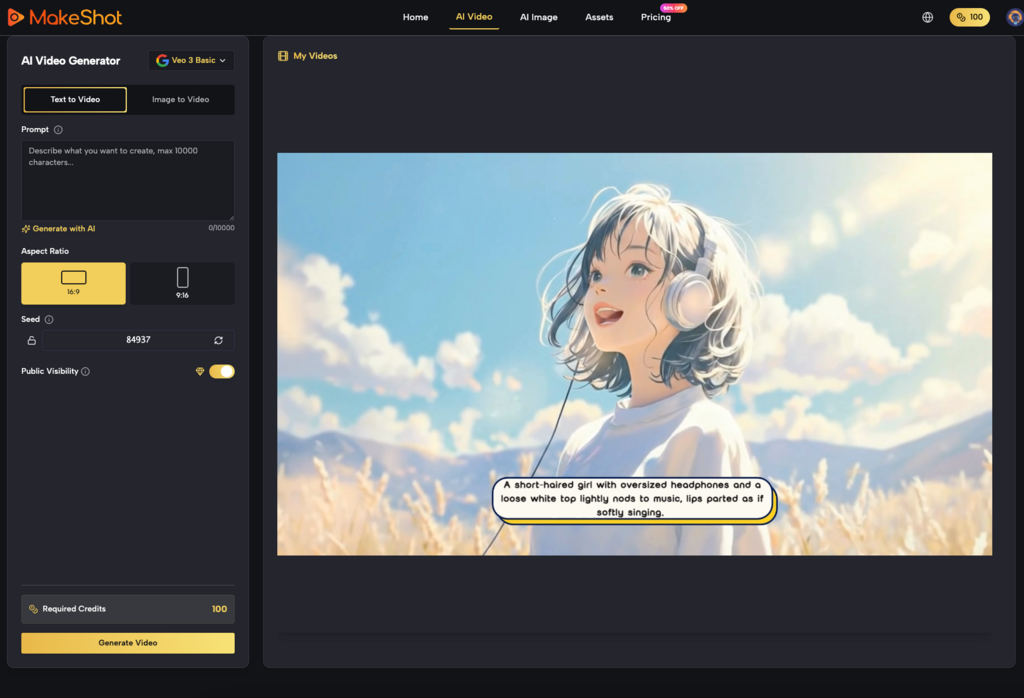

Platforms that let you compare across different models — like testing the same concept in Veo 3 versus Sora 2 — accelerate this learning process significantly. Each model has different strengths, and discovering those differences through direct comparison teaches you faster than reading documentation.

“AI Replaces the Creative Process”

It doesn’t. It changes the creative process.

Instead of spending hours on manual production tasks, you spend time on prompt refinement, output selection, and integration into your broader workflow. The time investment shifts rather than disappears.

How Workflows Actually Change Over Time

Week 1: Exploration

Everything feels experimental. You’re testing random prompts, seeing what different models produce, and building intuition about capabilities and limitations. Most output during this phase won’t be usable for real projects, and that’s fine.

Weeks 2 to 4: Pattern Recognition

You start noticing what works. Certain prompt structures consistently produce better results. You develop preferences for specific models based on your content needs. A video model like Veo 3 might become your go-to for content that needs synchronized audio, while Nano Banana Pro handles product visualization.

Week 5 and Beyond: Integration

The tool becomes part of your regular workflow rather than a separate experiment. You know when to use it and when traditional methods make more sense. Generation time becomes predictable, and you’ve built templates for common content types.

This progression isn’t instant. Expecting to skip straight to integration without the exploration phase leads to frustration.

Practical Considerations for Early Adopters

Start With Low-Stakes Projects

Don’t test an AI image creator on your most important client project. Begin with internal content, social media experiments, or personal projects where imperfect results don’t carry consequences.

This gives you room to learn without pressure.

Document What Works

Keep notes on successful prompts. When something produces great output, save the exact wording. Over time, this becomes a personal reference library that dramatically speeds up future projects.

Understand Model Differences

Not all models perform identically. Sora 2 might excel at cinematic storytelling, while Veo 3’s native audio generation can cut down on post-production work. Nano Banana Pro, an image model, supports multiple reference images, which makes it valuable for keeping a character consistent across a series.

Learning these distinctions — through actual use, not just reading about them — is part of the adoption process.

Addressing Common Concerns

| Concern | Reality |
| --- | --- |
| “Output quality won’t meet professional standards” | Quality varies by model and prompt. Cinema-grade output is achievable with some models, but it requires learning the tools. |
| “I’ll spend more time fixing AI output than creating manually” | Possible at first. This decreases significantly as your prompt-writing skills develop. |
| “These tools are too expensive to experiment with” | A unified platform can cost less than several separate subscriptions. |
| “I’ll lose creative control” | You keep control through prompt design and output selection. |

What I Wish I’d Known Earlier

Looking back at my first month with AI video and image tools, a few insights would have saved time:

Comparison accelerates learning. Running the same prompt through multiple models — possible on platforms like MakeShot that unify Veo 3, Sora 2, Nano Banana, and others — teaches you more in one session than weeks of single-model experimentation.

Reference images matter. When an AI image creator supports reference uploads, use them. They provide visual context that words alone can’t convey, leading to dramatically more consistent results.

Expectations need calibration. These tools won’t replace your entire production process overnight. They’ll handle specific tasks well, others poorly, and the boundary shifts as you learn.

Moving Forward Realistically

AI-powered content creation is genuinely useful — but it’s useful in the way any professional tool is useful. It requires learning, produces better results with practice, and works best when you understand its limitations.

If you’re considering adopting an AI video generator or AI image creator, approach it as a skill to develop rather than a switch to flip. The creators getting the most value from these tools invested time in understanding them, experimented through early uncertainty, and gradually built workflows that leverage AI strengths while compensating for weaknesses.

That process isn’t glamorous. But it’s honest — and it’s how real adoption actually works.
